Geometry nodes allow us to manipulate our objects procedurally in almost any way, shape or form that we desire. This can range from the shape of the model itself to its transforms, such as its rotation and scale.

We can manipulate the scale of our model in several ways: with the scale parameter of the transform node, with the scale vector node, and with the scale elements and scale instances nodes.

Each method of scaling will affect our object in a slightly different way, so we will need to learn how each of these methods affects our geometry and when they should be used to scale our model.

Manipulating The Scale Of An Object Using The Transform Node

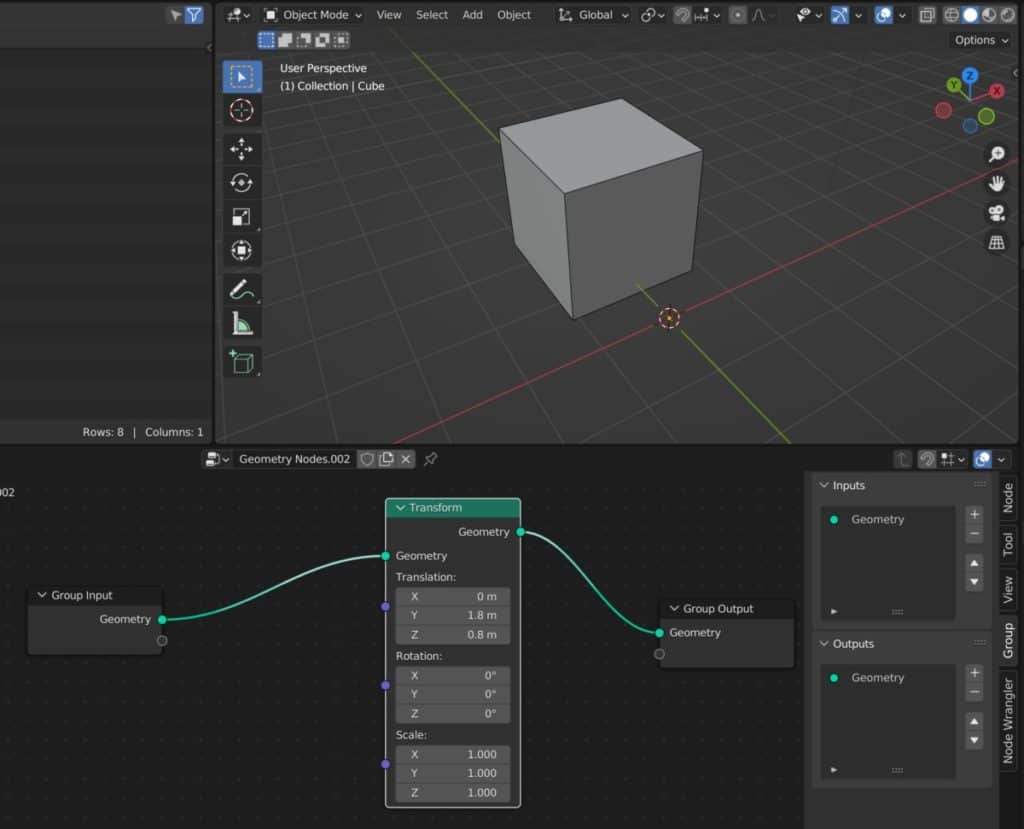

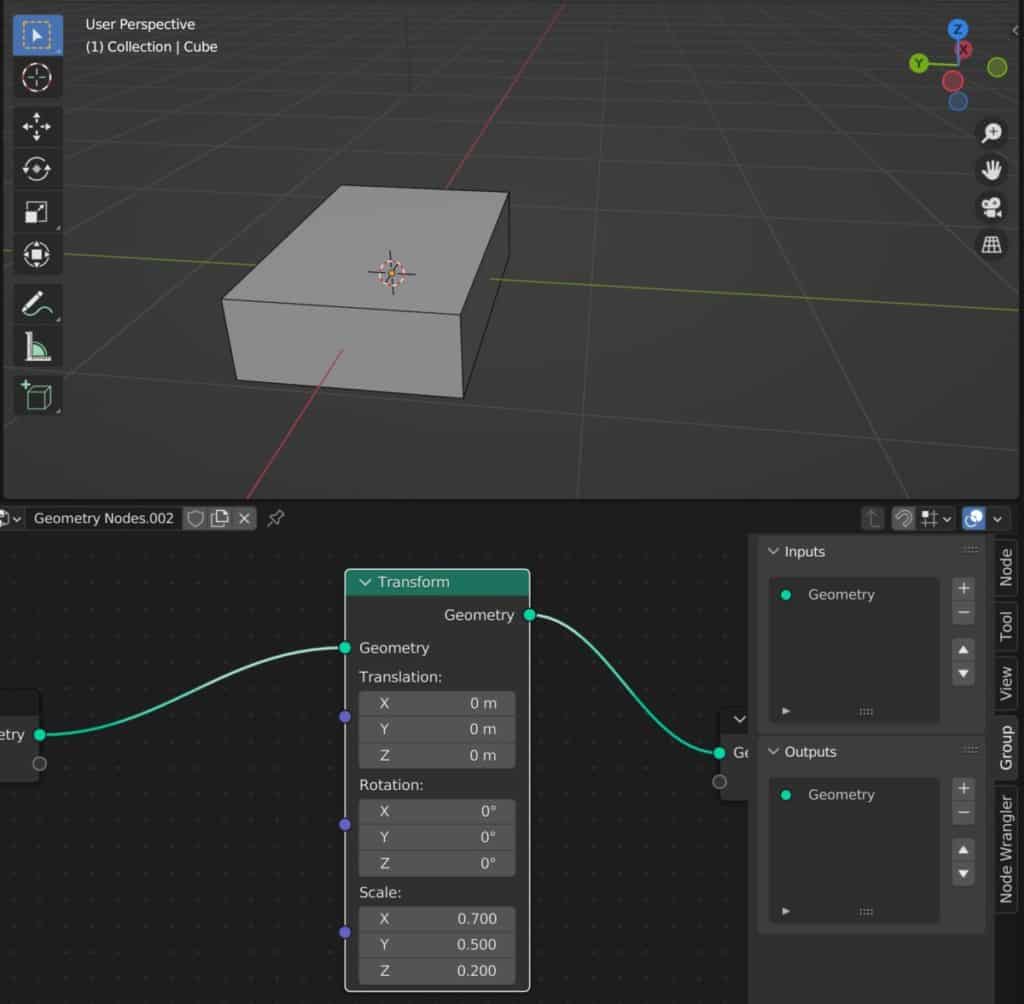

The most fundamental method for scaling our objects using geometry nodes is to use the scale parameter that is found in the transform node.

The transform node is very similar to what you would see in the side panel of the 3D viewport, as it contains the location, rotation, and scale data for our object.

The key difference is that the transform node works with the mesh data rather than the object data. So if we were to change the location value of our transform node, for example, our object’s geometry would move but the object origin would remain in place.
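To make the mesh-versus-object distinction concrete, here is a minimal sketch in plain Python (not Blender’s `bpy` API) that treats a mesh as a list of vertex positions. The function name `translate_mesh` is hypothetical; it only models what changing the transform node’s location value does to the geometry, while the object origin is kept as a separate point that is never edited.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
# A mesh is modelled as a list of (x, y, z) vertex positions, and the
# object origin is a separate point that the transform node never edits.

Vec3 = tuple[float, float, float]


def translate_mesh(vertices: list[Vec3], offset: Vec3) -> list[Vec3]:
    """Move every vertex by `offset`, like the transform node's location value."""
    ox, oy, oz = offset
    return [(x + ox, y + oy, z + oz) for (x, y, z) in vertices]


origin: Vec3 = (0.0, 0.0, 0.0)  # object origin: untouched by geometry nodes
quad: list[Vec3] = [(-1.0, -1.0, 0.0), (1.0, -1.0, 0.0),
                    (1.0, 1.0, 0.0), (-1.0, 1.0, 0.0)]

moved = translate_mesh(quad, (0.0, 0.0, 2.0))
print(moved[0], origin)  # (-1.0, -1.0, 2.0) (0.0, 0.0, 0.0)
```

Every vertex shifts up by two units, while `origin` stays exactly where it was.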

Within the transform node is the scale parameter, which works in much the same way as the scale tool in the 3D viewport.

Simply adjust the scale values on the X, Y, and Z axes to change the scale of your geometry along each axis.
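A minimal sketch of what the per-axis scale values do to the geometry, written in plain Python rather than Blender’s API. It assumes scaling happens about the geometry’s own origin at (0, 0, 0), and the helper name `scale_mesh` is hypothetical.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
Vec3 = tuple[float, float, float]


def scale_mesh(vertices: list[Vec3], scale: Vec3) -> list[Vec3]:
    """Multiply each coordinate by the matching scale channel.

    Scaling happens about the geometry's own origin at (0, 0, 0), so
    each axis is stretched or squashed independently of the others.
    """
    sx, sy, sz = scale
    return [(x * sx, y * sy, z * sz) for (x, y, z) in vertices]


corner: Vec3 = (1.0, 1.0, 1.0)  # one corner of a 2 m default cube
print(scale_mesh([corner], (2.0, 1.0, 0.5)))  # [(2.0, 1.0, 0.5)]
```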


Isolating Your Channels For The Scale

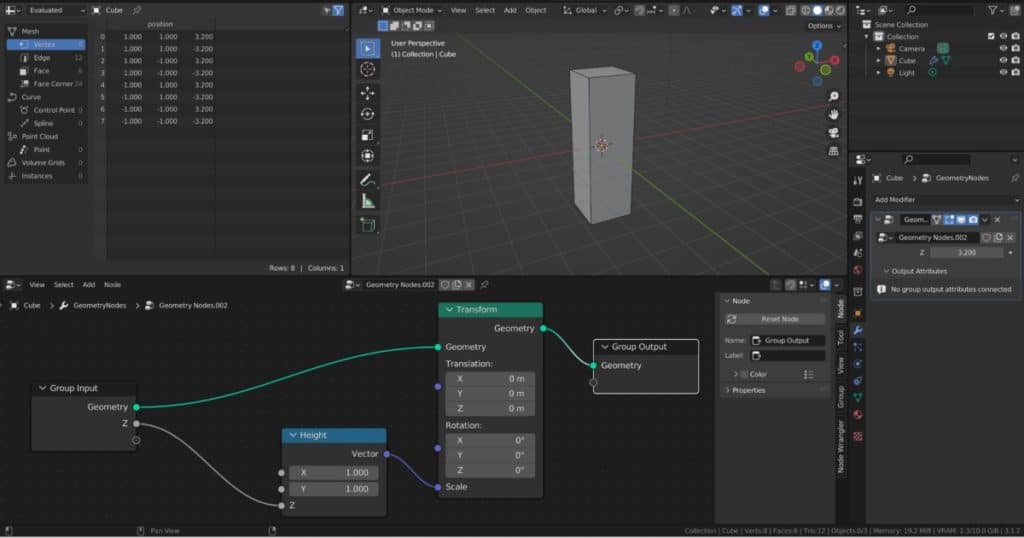

There are ways to gain further control over the individual channels of our scale. For example, let’s say we wanted to be able to scale on the Z axis by itself using our geometry nodes modifier.

To do this, we first need to separate the scale parameter into its individual channels. Add a combine XYZ node to your setup and connect its vector output to the scale input.

Set the value of each axis in the combine XYZ node to one. Then connect the Z input to the group input node, exposing it to the modifier.
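The combine XYZ setup above can be modelled as a small function: X and Y are fixed at 1, and only Z comes from the value exposed on the modifier. This is a plain-Python sketch, not Blender’s API, and the names `combine_xyz` and `z_only_scale` are hypothetical.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
Vec3 = tuple[float, float, float]


def combine_xyz(x: float, y: float, z: float) -> Vec3:
    """Pack three floats into one vector, as the combine XYZ node does."""
    return (x, y, z)


def z_only_scale(z_from_modifier: float) -> Vec3:
    # X and Y are typed into the node as 1.0; only Z comes from the
    # hypothetical value exposed through the group input / modifier panel.
    return combine_xyz(1.0, 1.0, z_from_modifier)


print(z_only_scale(3.0))  # (1.0, 1.0, 3.0)
```

Because X and Y stay at 1, changing the modifier value only ever stretches or squashes the model vertically.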

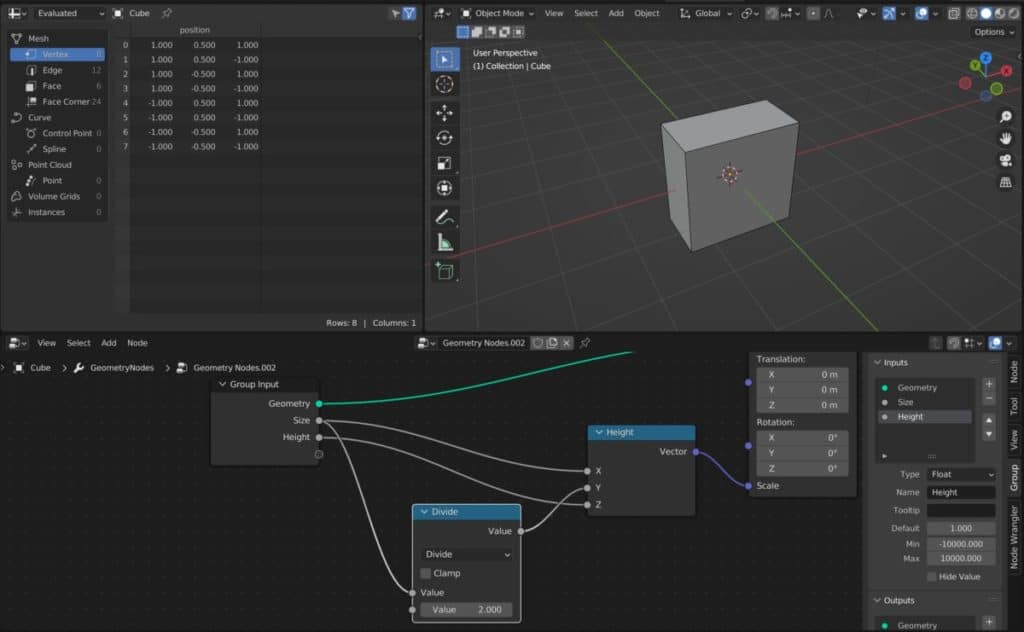

We can also use math nodes to influence each of our channels. For example, let’s say I wanted to create a shape where the X value of the scale is always double the Y value. In other words, the width would be twice the length.

To do this, I could connect both the X and Y channels to a new socket on the group input node and name it Size.

We could then introduce a math node set to divide and attach it to the noodle that connects to the Y value.

Then we set the divide node to divide by two. Whatever value we enter for Size in the modifier is used directly for the X axis (the width), and that same value divided by two is used for the Y axis (the length).
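The arithmetic behind the Size setup is easy to check with a short plain-Python sketch. The function name `size_to_scale` is hypothetical, and the Z channel is assumed to stay at 1.0, since the setup above only drives X and Y.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
Vec3 = tuple[float, float, float]


def size_to_scale(size: float, z: float = 1.0) -> Vec3:
    width = size          # X channel reads the Size input directly
    length = size / 2.0   # Y channel passes through a math node set to divide by 2
    return (width, length, z)  # Z is assumed to stay at 1.0


print(size_to_scale(4.0))  # (4.0, 2.0, 1.0)
```

Whatever Size is set to, the X scale is always exactly twice the Y scale.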

Scaling The Individual Elements Of The Model

While the transform node allows us to manipulate the scale of the entire mesh, at times we will want to scale only specific parts of the mesh, or scale each of our faces independently of each other. We can do this with the scale elements node.

In geometry nodes, when we use the term elements, we are referring to each individual vertex, edge, or face on our mesh. The term can also be used for other types of data, such as curve data, where the elements are the individual control points used to construct our curves.

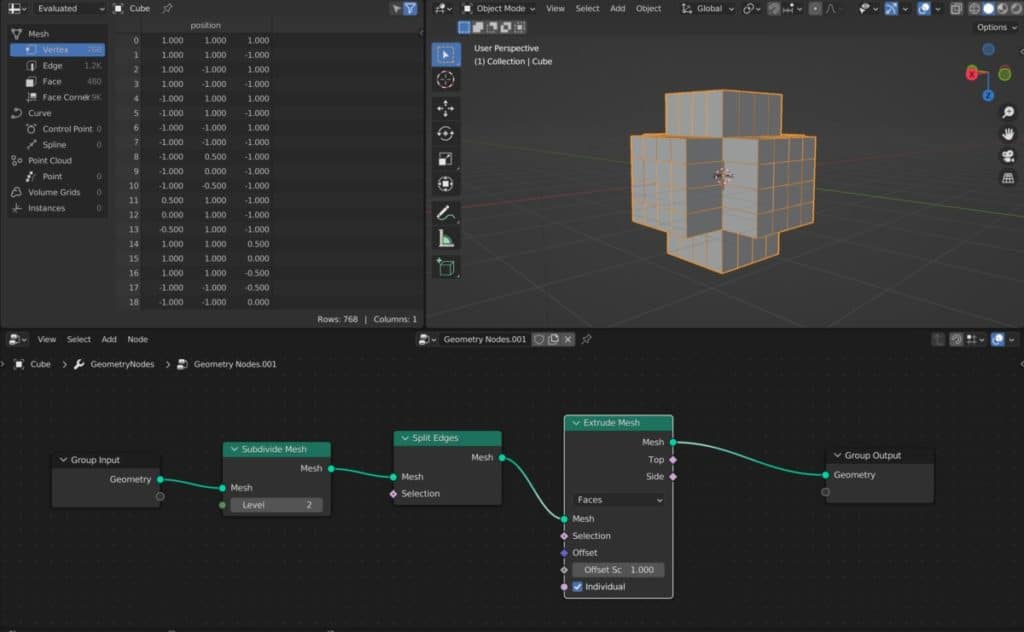

For example, let’s say we wanted to create an abstract effect where we can scale the individual elements of our objects after they have been initially extruded.

Below we have a setup where we have split the individual edges of our geometry and then extruded it.

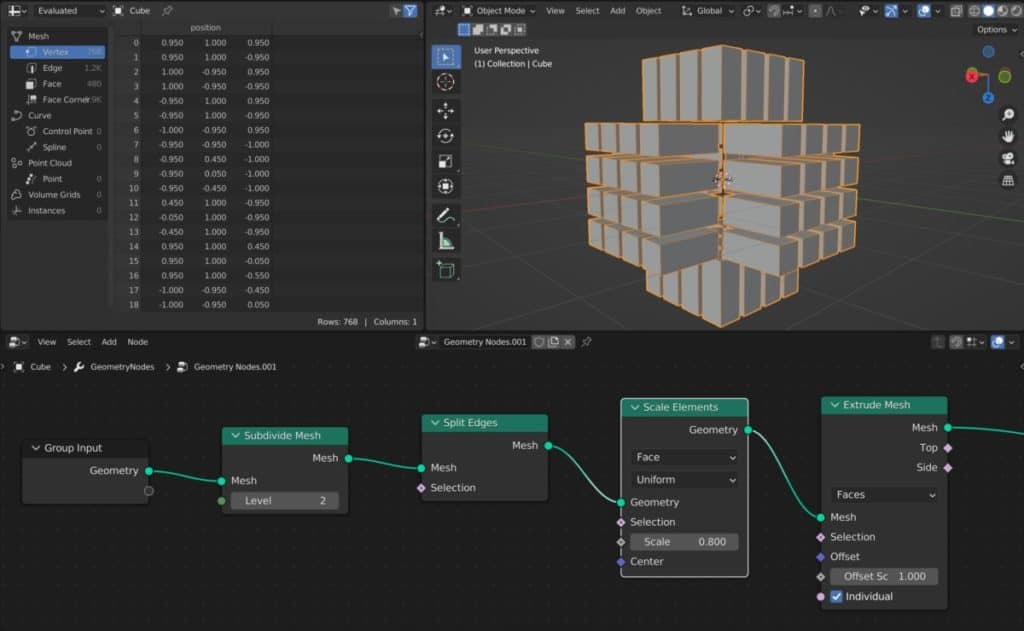

We now want to be able to scale down the extruded faces so that we can see the gap in between them. To do this, we’re going to introduce the scale elements node and then position it before our extrude mesh node.

Once we do this, we will be able to reduce the scale value of the scale elements node, shrinking the individual faces before they are extruded.
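The gap appears because each face is scaled about its own centre rather than about the object origin. A minimal plain-Python sketch of that idea follows; it assumes a face’s centre is the average of its corners, and the helper names `face_center` and `scale_face` are hypothetical, not Blender functions.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
Vec3 = tuple[float, float, float]
Face = list[Vec3]


def face_center(face: Face) -> Vec3:
    """Average of the face's corner positions."""
    n = len(face)
    return (sum(v[0] for v in face) / n,
            sum(v[1] for v in face) / n,
            sum(v[2] for v in face) / n)


def scale_face(face: Face, factor: float) -> Face:
    """Uniformly scale one face about its own centre."""
    cx, cy, cz = face_center(face)
    return [(cx + (x - cx) * factor,
             cy + (y - cy) * factor,
             cz + (z - cz) * factor) for (x, y, z) in face]


# Two neighbouring faces that no longer share vertices after splitting the edges.
left: Face = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (2.0, 2.0, 0.0), (0.0, 2.0, 0.0)]
right: Face = [(2.0, 0.0, 0.0), (4.0, 0.0, 0.0), (4.0, 2.0, 0.0), (2.0, 2.0, 0.0)]

small_left = scale_face(left, 0.5)
small_right = scale_face(right, 0.5)
gap = min(v[0] for v in small_right) - max(v[0] for v in small_left)
print(gap)  # 1.0
```

Each face keeps its centre but shrinks towards it, which is what opens up a gap between neighbours that used to touch.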

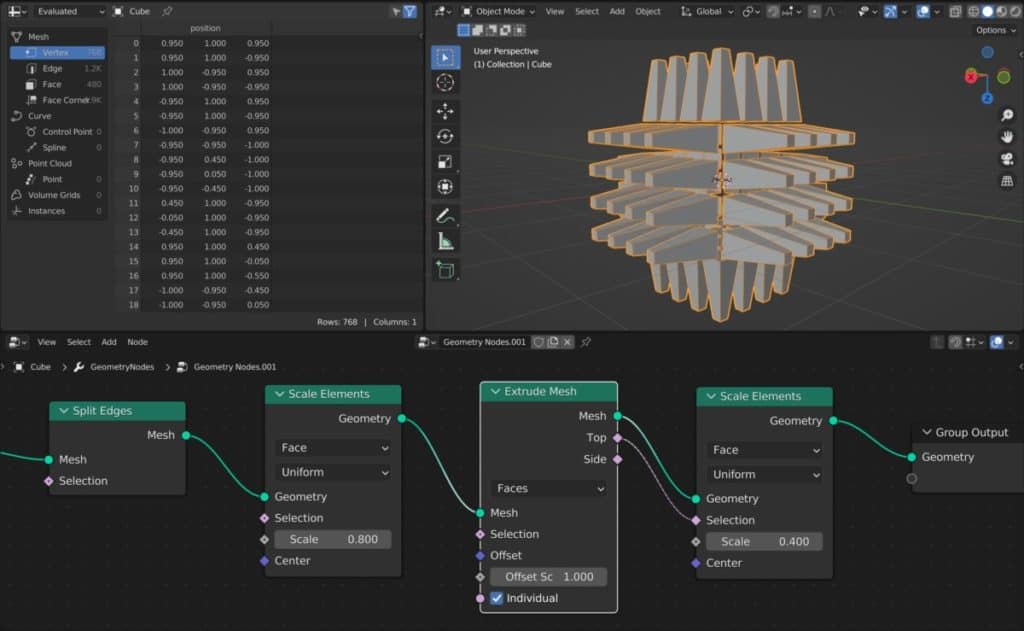

We can use our scale elements node in a wide variety of situations. Even in this example, we could use the scale elements node in a different way.

With scale elements, we can define a selection of faces that we want to scale down. In this example, I’m going to want to scale down all of the top faces that were extruded.

To do so, I’m going to add a second scale elements node to this setup and position it after the extrude mesh node.

Then I’m going to connect the top output of the extrude mesh node and use it as the selection for my second scale elements node before reducing the scale value.


Control The Scale Using The Position Attribute

A common way of determining which parts of your model are going to be scaled is to use the position attribute to define your selection.

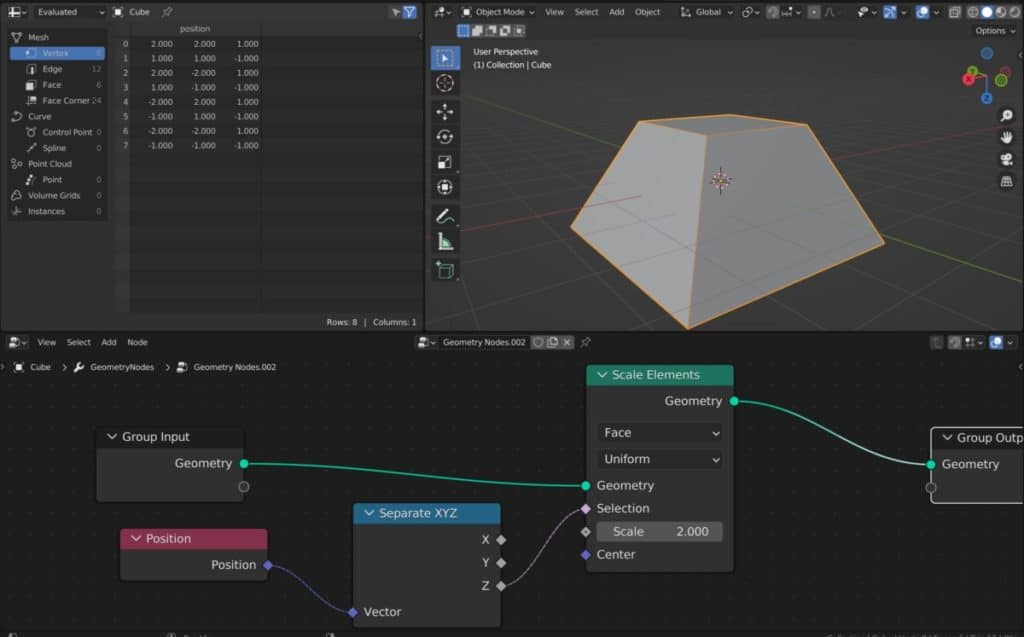

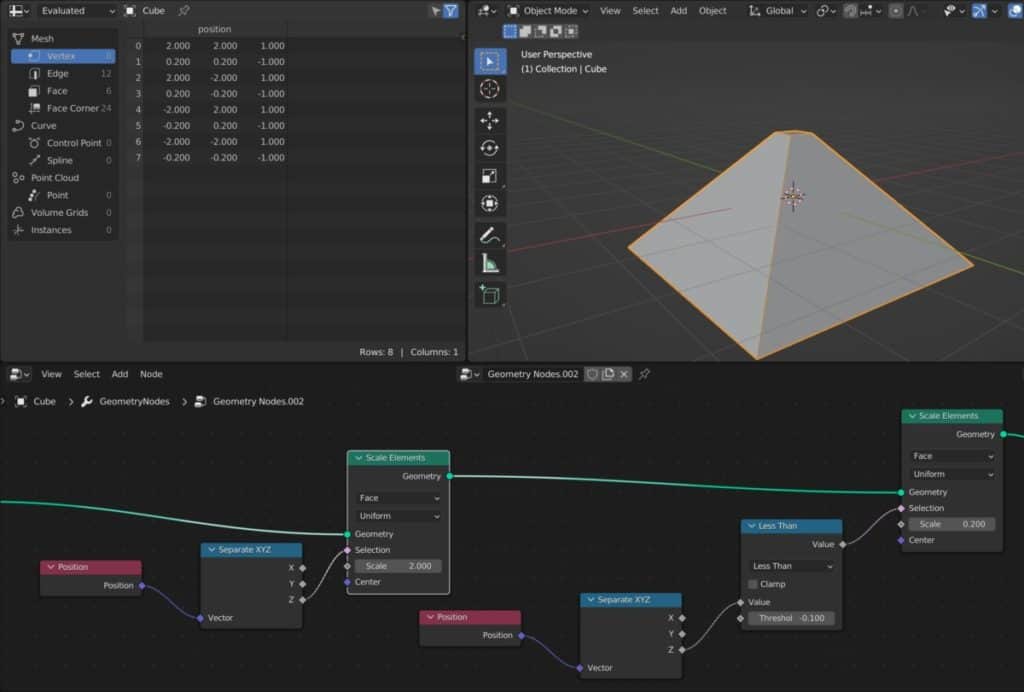

In our next example, we are going to create a pyramid shape from a basic cube by scaling up the bottom half of our cube and then scaling down the top half. We’re going to do this by determining the position of our faces and points.

The first thing we do in our example is add a scale elements node, which by default scales the entire model uniformly, much like the scale parameter of the transform node.

We then need to limit the scaling based on position data. We can access the position attribute by adding the position node to our setup and then connecting it to our selection.

Since our example requires us to focus on the Z axis instead of the X and Y axes, we want to separate our channels so that only the Z axis is used to determine our selection. To do this, we add a separate XYZ node.

If we now increase the scale, you will notice that only the bottom half of our model is affected by the scale elements node. With this setup, we are telling Blender to scale up all of the faces that have a value of 0 or less on the Z axis.

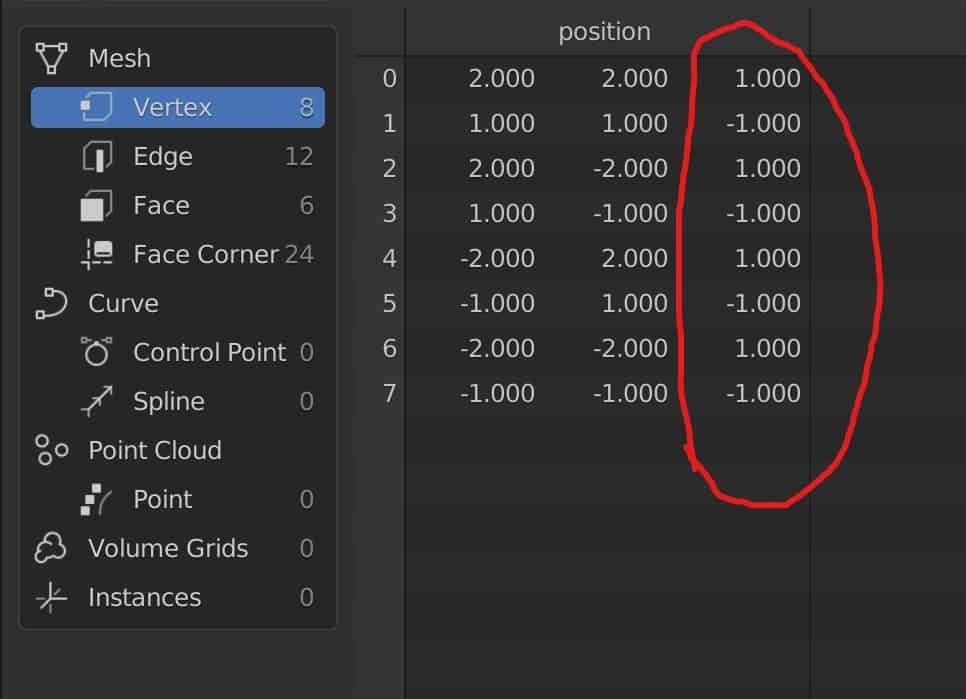

If we take a closer look at the spreadsheet, we can see that the position attribute is stored on the vertex data and that half of our vertices have a value of one in the Z channel.

All of our vertices that do not have a value of one in the Z channel are affected by the scale as a result of our setup.
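The spreadsheet check can be reproduced with a minimal plain-Python sketch of the default 2 m cube’s eight vertices. The selection rule (Z at or below 0) follows the description of the setup above; it is modelled here as an explicit comparison, not as a reproduction of how Blender evaluates the field internally, and `separate_z` is a hypothetical name.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
from itertools import product

# The eight corners of a default 2 m cube, as listed in the spreadsheet.
cube = [(x, y, z) for x, y, z in product((-1.0, 1.0), repeat=3)]


def separate_z(position):
    """Keep only the Z channel, like the Z output of a separate XYZ node."""
    return position[2]


# Selection rule as described above: Z at or below 0 is scaled.
selected = [v for v in cube if separate_z(v) <= 0.0]
top = [v for v in cube if separate_z(v) == 1.0]

print(len(top), len(selected))  # 4 4
```

Half of the vertices sit at Z = 1, and the selection picks out exactly the other half.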

We can now use this information to scale down the top vertices of our cube to create more of that pyramid-like structure.

Add a second scale elements node and repeat the process from the first: access the position attribute and isolate the Z channel using a separate XYZ node.

The only difference is that we now need to change the formula that Blender is using so that it scales the top vertices rather than the bottom ones.

One way of doing this is to use a math node set to a comparison operation, such as greater than or less than.

In our example, we want to remove the bottom vertices from our selection, so we are going to introduce a less than node and set its value to -0.1.

This allows us to scale the top vertices of our cube with the second scale elements node, while still using the first scale elements node to manipulate the bottom vertices.
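The math node’s less than and greater than operations output 1.0 when the comparison holds and 0.0 otherwise, which is what lets them act as a selection. The plain-Python sketch below shows one way a -0.1 threshold splits the cube’s bottom and top faces so each can be scaled separately. Which comparison picks out the top depends on how the Z value and threshold are wired, so this wiring and the scale factors 1.5 and 0.5 are hypothetical, not the exact setup in the screenshot.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
Vec3 = tuple[float, float, float]
Face = list[Vec3]


def less_than(value: float, threshold: float) -> float:
    """Math node 'less than': 1.0 if value < threshold, else 0.0."""
    return 1.0 if value < threshold else 0.0


def greater_than(value: float, threshold: float) -> float:
    """Math node 'greater than': 1.0 if value > threshold, else 0.0."""
    return 1.0 if value > threshold else 0.0


def scale_face(face: Face, factor: float) -> Face:
    """Uniformly scale one face about the average of its corners."""
    n = len(face)
    c = [sum(v[i] for v in face) / n for i in range(3)]
    return [tuple(c[i] + (v[i] - c[i]) * factor for i in range(3)) for v in face]


bottom: Face = [(-1.0, -1.0, -1.0), (1.0, -1.0, -1.0), (1.0, 1.0, -1.0), (-1.0, 1.0, -1.0)]
top: Face = [(-1.0, -1.0, 1.0), (1.0, -1.0, 1.0), (1.0, 1.0, 1.0), (-1.0, 1.0, 1.0)]

THRESHOLD = -0.1
result = []
for face in (bottom, top):
    z = face[0][2]  # every corner of these two faces shares the same Z
    if less_than(z, THRESHOLD):        # bottom face: first scale elements node
        face = scale_face(face, 1.5)
    elif greater_than(z, THRESHOLD):   # top face: second scale elements node
        face = scale_face(face, 0.5)
    result.append(face)

print(result[0][0], result[1][0])  # (-1.5, -1.5, -1.0) (-0.5, -0.5, 1.0)
```

The bottom grows and the top shrinks, giving the tapered, pyramid-like profile the example is aiming for.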


How To Scale Instances In Geometry Nodes?

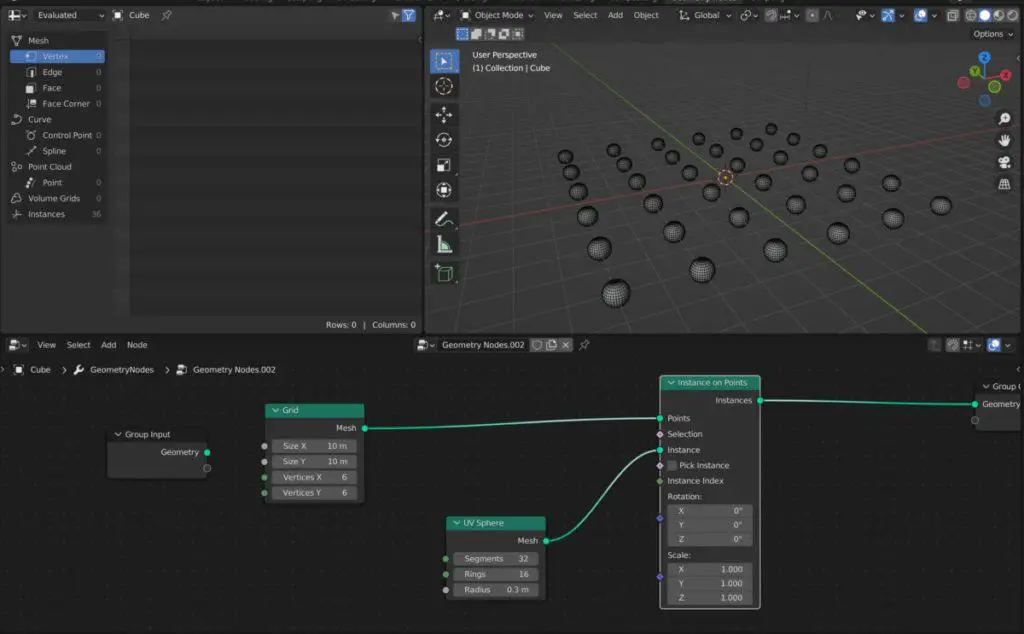

When geometry nodes was first introduced, it specialised in placing multiple instances of existing objects onto the current model. In other words, it was well suited to situations where we wanted to repeat the same model many times across an environment, such as grass or rocks in a field.

Instead of using something like scale elements to manipulate the scale of these instances, we’re going to need a slightly different node, which is the scale instances node.

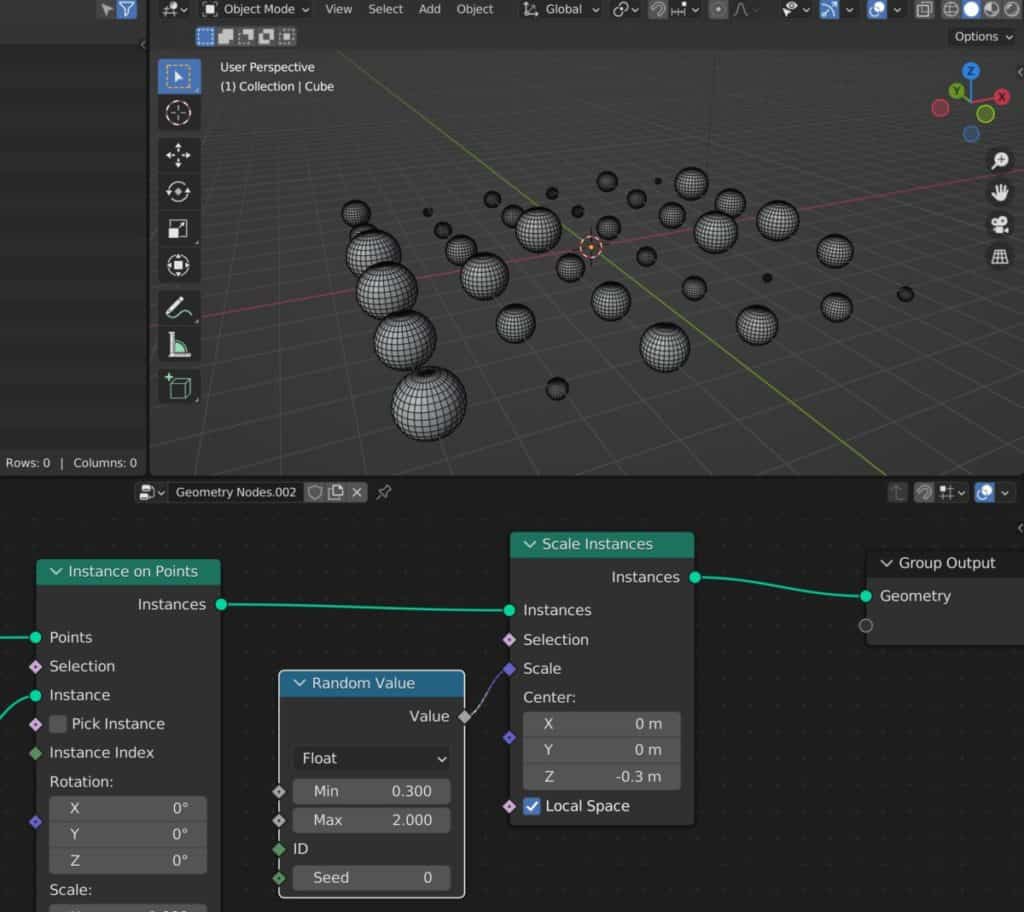

Below we have a setup where we have created a simple primitive object that has been distributed across a plane.

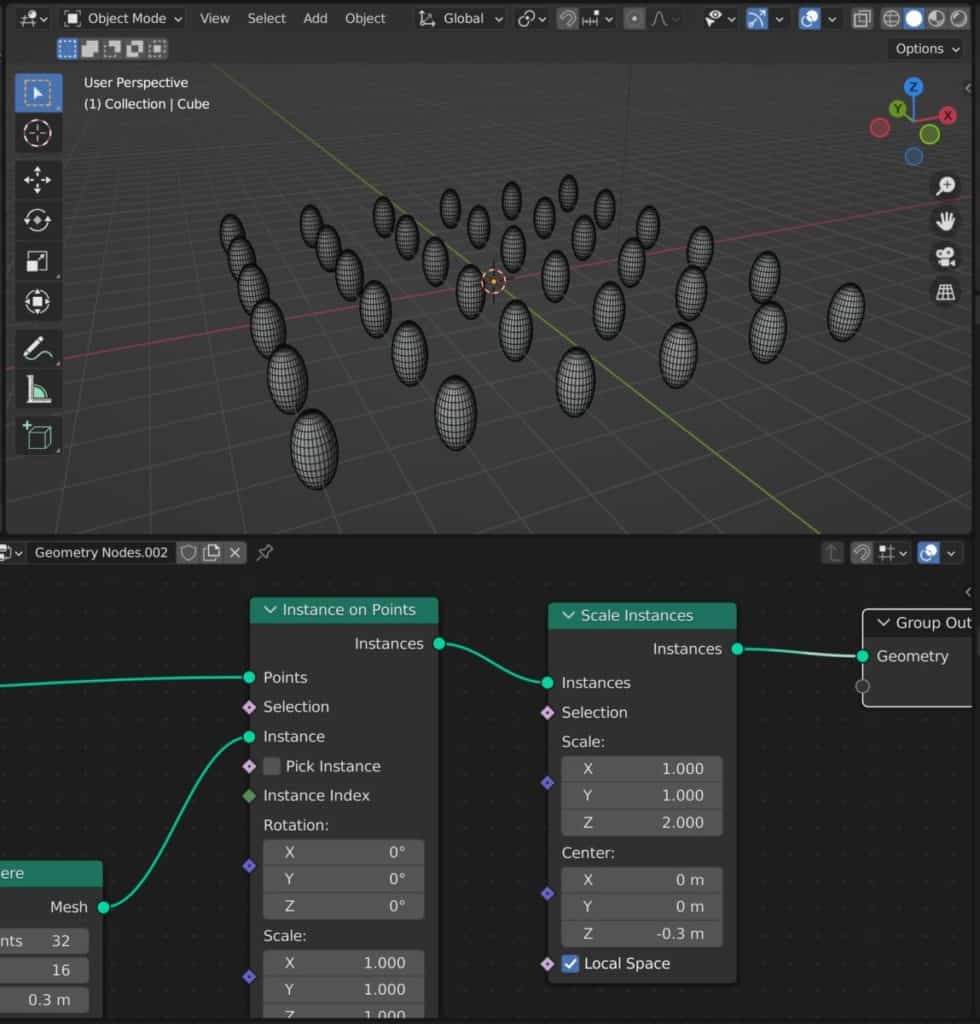

To successfully scale our instances, we can use the scale instances node instead of the scale elements node and attach it after the instance on points node.

Using scale instances, we will be able to scale on all three axes independently and even define the origin, or centre point, that our scale is based on.

In our example, we have lowered the centre point on the Z axis and then scaled up on the same axis to stretch out our individual UV spheres.
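Lowering the centre point changes which part of each instance stays fixed while it is scaled. The plain-Python sketch below models a per-axis scale about a pivot in the instance’s local space; it assumes a UV sphere of radius 1 with the pivot moved down to its base, and the name `scale_about` and all the numbers are hypothetical.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
Vec3 = tuple[float, float, float]


def scale_about(point: Vec3, center: Vec3, scale: Vec3) -> Vec3:
    """Scale a point per axis about `center` (the pivot), in local space."""
    return tuple(c + (p - c) * s for p, c, s in zip(point, center, scale))


sphere_top: Vec3 = (0.0, 0.0, 1.0)      # top of a radius-1 UV sphere instance
sphere_bottom: Vec3 = (0.0, 0.0, -1.0)  # bottom of the same sphere
pivot: Vec3 = (0.0, 0.0, -1.0)          # centre point lowered on Z to the base
stretch: Vec3 = (1.0, 1.0, 3.0)         # scaled up on the Z axis only

print(scale_about(sphere_top, pivot, stretch))     # (0.0, 0.0, 5.0)
print(scale_about(sphere_bottom, pivot, stretch))  # (0.0, 0.0, -1.0)
```

With the pivot at the base, the bottom of each sphere stays planted on the surface and all of the stretching goes upwards.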

Randomizing The Scale Of Instanced Objects

If we want to take things one step further, then we can even randomise the scale of our instanced geometry.

An easy way to do this is to introduce a random value node to our setup. For our example, we are going to randomise the scale on all three axes at the same time.

Add a random value node to your setup and then connect its value output to the scale input of the scale instances node.

You can manipulate the minimum and maximum values. In our example we are using values anywhere from 0.3 to 3.0 for our scale.
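A float random value means one number is drawn per instance and reused on X, Y and Z, so every instance is scaled uniformly. This plain-Python sketch uses Python’s `random` module with a fixed seed as an assumption; Blender’s node derives its values from its own ID and Seed inputs, so the actual numbers will differ. The function name `random_uniform_scale` is hypothetical.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
import random

rng = random.Random(42)  # fixed seed so the sketch is repeatable


def random_uniform_scale(minimum: float = 0.3,
                         maximum: float = 3.0) -> tuple[float, float, float]:
    value = rng.uniform(minimum, maximum)  # one float per instance...
    return (value, value, value)           # ...used on X, Y and Z alike


scales = [random_uniform_scale() for _ in range(5)]  # five hypothetical instances
for s in scales:
    print(s)
```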

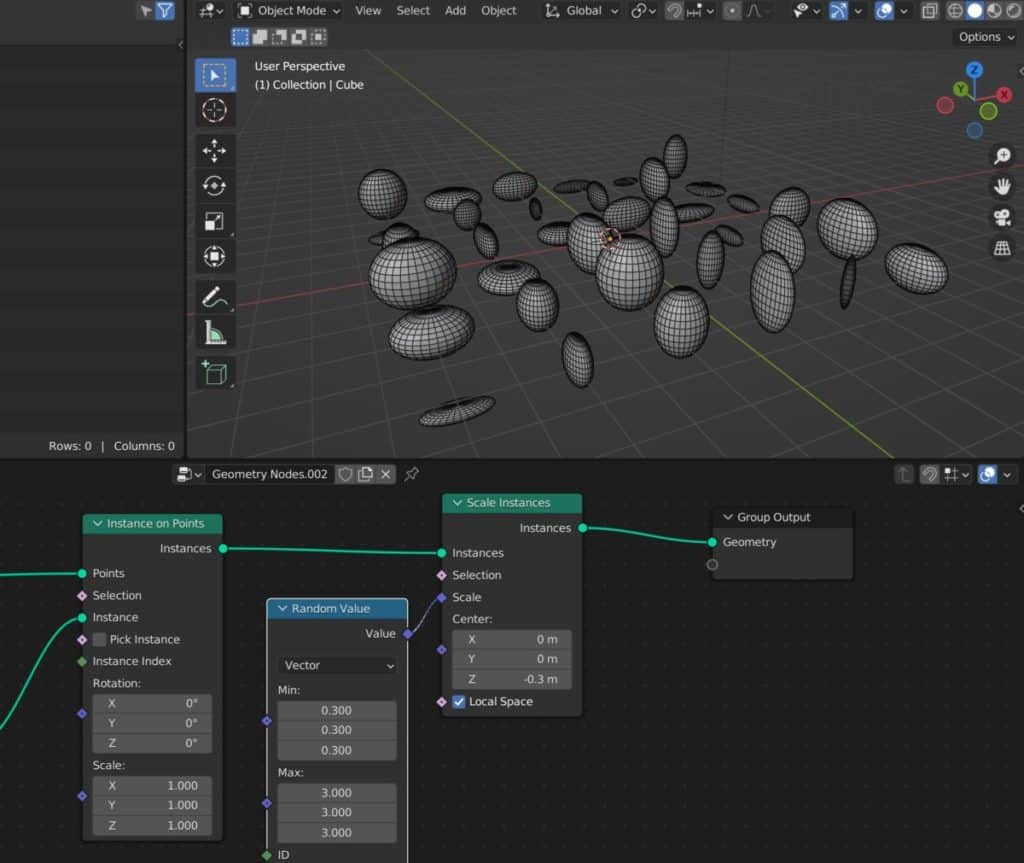

We can also use the random value node to scale each of our three channels by a different value. To do this, we need to change the type of data that the random value node is generating. Currently it is using a float value, which is applied to all three channels.

The next step is to change the data type within the random value node to vector. This requires us to reconnect the random value node to our scale instances node.

Then we will be able to generate our own values for the minimum and maximum scale of each axis.

Once we have applied our values, we will be able to see in our 3D viewport that our instances are all scaled differently on each axis, thanks to the random value node.
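Switching to the vector type means each axis is drawn independently from its own minimum and maximum. The plain-Python sketch below illustrates this; the per-axis ranges `MIN_SCALE` and `MAX_SCALE`, the seed, and the function name `random_vector_scale` are hypothetical, and Blender’s own random values will not match Python’s.

```python
# Illustrative model only: plain Python, not Blender's bpy API.
import random

rng = random.Random(7)  # fixed seed so the sketch is repeatable

MIN_SCALE = (0.5, 0.5, 1.0)  # hypothetical per-axis minimum
MAX_SCALE = (1.5, 1.5, 3.0)  # hypothetical per-axis maximum


def random_vector_scale(lo=MIN_SCALE, hi=MAX_SCALE):
    """Draw each axis independently from its own [min, max] range."""
    return tuple(rng.uniform(a, b) for a, b in zip(lo, hi))


scales = [random_vector_scale() for _ in range(5)]  # five hypothetical instances
for s in scales:
    print(s)
```

Because each channel gets its own draw, instances end up with different proportions, not just different overall sizes.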

We hope this clarifies some of the methods that you can use for scaling your elements and instances as well as entire objects using the geometry node system.
